Marketing evaluation: What are you doing wrong and how can you do it better?

Martech is on the increase, with a recent Gartner report stating that 621 surveyed marketing leaders will increase their spend on marketing technology by 32% in 2018, with martech taking 29% of the total marketing budget. A proportion of this will go on marketing evaluation and analytics platforms, but are they any good?

There is a myriad of approaches to evaluating media efficiency, each with its own pros and cons. In this article, we explore a few of them and, hopefully, help you get the most from your analytics providers!

Evaluation?

“Evaluation” can mean different things to different people. In the simplest case, a successful campaign is one where more exposure was achieved than was planned (or paid for). This brings us to our first evaluation methodology: simply the value or measure of exposure. Needless to say, this is a horrible way to evaluate media. Simply knowing what was achieved, whether in impacts, OTS, Facebook likes or YouTube views, is utterly useless. In fact, the International Association for Measurement and Evaluation of Communication (AMEC) has launched a global initiative to eradicate fully the use of Advertising Value Equivalents (AVEs), and all of their derivatives, as metrics. No matter how you dress your metrics up, if you are looking at anything other than concrete impacts on business outcomes (e.g. sales, footfall, quotes, registrations), you are not truly evaluating media.

Now that we have established what true evaluation entails, let’s dig deeper into the various (non-media-value) methodologies out there.

Lift (AKA A/B Testing)

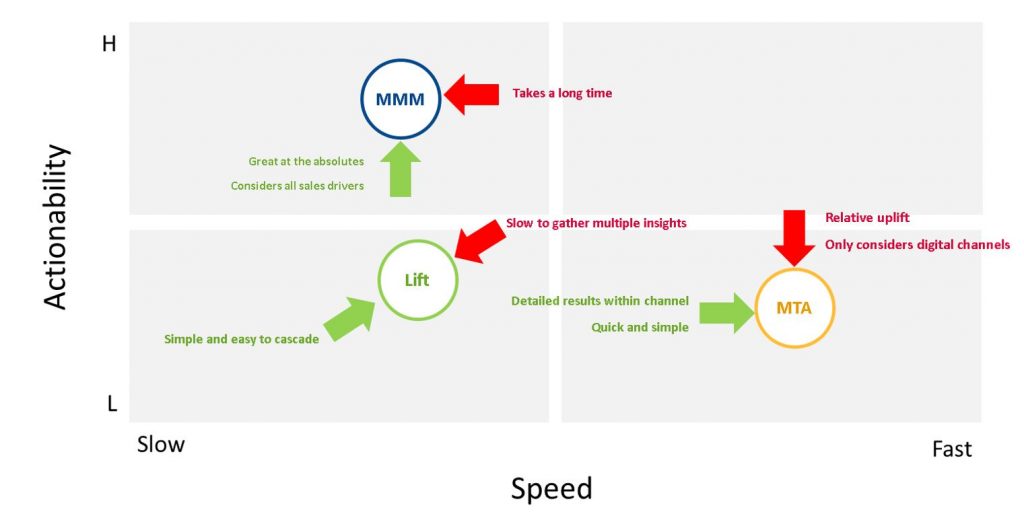

Lift analysis uses simple analytics to determine the incremental effect of a ‘test’ versus a ‘control’. This is great if you have a few hundred years to build up a suite of actions you want to test, deploy the experiments separately and then pick your tests and controls perfectly… On top of that, Lift focuses only on the short term and struggles to pull out any longer-term effects of activities. On the plus side, it’s simple: relatively straightforward to set up, analyse and communicate.
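As an illustration of the arithmetic behind a simple lift read, the sketch below compares conversion rates between a hypothetical exposed (test) group and a hold-out (control) group, and attaches a pooled two-proportion z-test as the significance check. The helper name `lift_analysis` and all the numbers are assumptions made for the example, and the z-test is one common choice rather than the only valid one.

```python
import math


def lift_analysis(test_conv, test_n, ctrl_conv, ctrl_n):
    """Incremental lift of a 'test' group over a 'control' group.

    Returns absolute lift, relative lift, the z statistic and a two-sided
    p-value from a pooled two-proportion z-test.
    """
    p_test = test_conv / test_n
    p_ctrl = ctrl_conv / ctrl_n
    abs_lift = p_test - p_ctrl
    rel_lift = abs_lift / p_ctrl
    # Pooled conversion rate under the null hypothesis of no difference.
    p_pool = (test_conv + ctrl_conv) / (test_n + ctrl_n)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / test_n + 1 / ctrl_n))
    z = abs_lift / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return {"abs_lift": abs_lift, "rel_lift": rel_lift, "z": z, "p_value": p_value}


# Hypothetical campaign: 10,000 people exposed, 10,000 held out.
result = lift_analysis(test_conv=560, test_n=10_000, ctrl_conv=500, ctrl_n=10_000)
print(f"relative lift: {result['rel_lift']:.1%}, p = {result['p_value']:.3f}")
```

The read only covers conversions inside the test window, which is exactly why Lift struggles with longer-term effects.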

Track-it!

Unfortunately, some marketers are still stuck in the 1990s and 2000s, when “bespoke phone numbers” on various bits of media (e.g. email, door drops, TV, radio, press) were used to evaluate media efficiency. This is the first and most basic method of “true” evaluation: last-touch attribution. It’s a popular way of looking at the world because it is absurdly simple and easy to understand, but it is also one of the worst “true” evaluation methodologies out there. An analogy would be to assume that someone got to your office by bus because they stepped off a bus at your front door. What this ignores is that, before getting on the bus, an Uber driver took them to the airport, a plane flew them across the Atlantic and the tube took them to the centre of town, from where they finally walked to the bus stop. At least you’re acknowledging the bus they took at the end!
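A minimal sketch of last-touch attribution makes the “bus” problem visible. The journeys, channel names and the `last_touch_attribution` helper are hypothetical, chosen only to illustrate the rule: each converting journey gives all of its credit to the final touchpoint.

```python
from collections import Counter


def last_touch_attribution(paths):
    """Give 100% of each conversion's credit to the final touchpoint.

    `paths` is a list of (touchpoints, converted) pairs, where touchpoints
    is the ordered list of channels a customer was exposed to.
    """
    credit = Counter()
    for touchpoints, converted in paths:
        if converted and touchpoints:
            credit[touchpoints[-1]] += 1
    return dict(credit)


# Hypothetical customer journeys (ordered oldest to newest touchpoint).
journeys = [
    (["tv", "radio", "email", "ppc"], True),
    (["press", "ppc"], True),
    (["tv", "email"], False),
]
print(last_touch_attribution(journeys))  # {'ppc': 2}
```

TV, radio, press and email receive no credit at all, even though they all sit on converting paths.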

Multi-track it!

Of course, this “track-it!” approach can be executed to the n-th degree online, so we’ve ended up with a ridiculous over-reliance on multi-touch attribution (MTA). If all your advertising money goes online and you only ever sell online, this is a fine way of evaluating media; otherwise, it isn’t. MTA uses pathways derived from cookie data to calculate each channel’s contribution to a sale or conversion. This moves beyond last click (so we at least acknowledge that something other than the bus played a role in the journey) and seeks to attribute each sale back to every touchpoint along the pathway using a probabilistic methodology. Beware of MTA providers who simply apply fixed weights across each pathway (AKA attribution rules): this is not a modelled approach but a simulated one, and it is only as good as the assumptions used to set up the rules. MTA can deliver a wealth of detailed information, particularly within channel (think PPC by keyword, or Display by creative/platform). Where MTA struggles is accuracy: its premise is that all sales and conversions come from a digital touchpoint. This clearly isn’t realistic, as other factors such as brand equity, pricing, seasonality and offline media also affect sales. The other drawback is that it can only focus on the short term, so brand-building channels such as YouTube and Facebook tend to be underrepresented.
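To make the contrast between attribution rules and a probabilistic approach concrete, the sketch below puts a fixed linear rule next to a first-order Markov-chain “removal effect” model. The Markov approach is one widely used probabilistic MTA technique, not necessarily what any particular provider uses. The journeys and all function names are hypothetical, and, like any MTA, the model can only share out conversions that already sit on a tracked digital path.

```python
from collections import defaultdict

START, CONV, NULL = "(start)", "(conversion)", "(null)"


def transition_probs(paths):
    """Estimate first-order transition probabilities from observed journeys."""
    counts = defaultdict(lambda: defaultdict(int))
    for touchpoints, converted in paths:
        states = [START, *touchpoints, CONV if converted else NULL]
        for a, b in zip(states, states[1:]):
            counts[a][b] += 1
    return {a: {b: n / sum(nxt.values()) for b, n in nxt.items()}
            for a, nxt in counts.items()}


def conversion_prob(probs, removed=None, iters=200):
    """P(reaching CONV from START). A removed channel passes no traffic on,
    so anyone reaching it is lost, exactly as if they had dropped to NULL."""
    value = defaultdict(float, {CONV: 1.0})
    for _ in range(iters):  # fixed-point iteration on the absorbing chain
        for state, nxt in probs.items():
            if state != removed:
                value[state] = sum(p * value[b] for b, p in nxt.items())
    return value[START]


def markov_attribution(paths):
    """Share total conversions by each channel's normalised removal effect."""
    probs = transition_probs(paths)
    base = conversion_prob(probs)
    channels = {t for touchpoints, _ in paths for t in touchpoints}
    effects = {c: (base - conversion_prob(probs, removed=c)) / base for c in channels}
    total_conv = sum(converted for _, converted in paths)
    total_effect = sum(effects.values())
    return {c: total_conv * e / total_effect for c, e in effects.items()}


def linear_attribution(paths):
    """Rule-based contrast: split each conversion equally across its touchpoints."""
    credit = defaultdict(float)
    for touchpoints, converted in paths:
        if converted:
            for t in touchpoints:
                credit[t] += 1 / len(touchpoints)
    return dict(credit)


# Hypothetical journeys: (ordered touchpoints, converted?)
journeys = [
    (["display", "ppc"], True),
    (["display", "email"], False),
    (["ppc"], True),
    (["email", "ppc"], True),
    (["display"], False),
]
print("rules :", linear_attribution(journeys))
print("markov:", {c: round(v, 2) for c, v in markov_attribution(journeys).items()})
```

On these made-up journeys the linear rule gives Display 0.5 conversions, while the removal effect credits it with about 0.82, because taking Display out of the chain would halve the modelled conversion rate (from 60% to 30%). The rule never looks at that evidence; the model does.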

MMM (Marketing mix modelling) it!

MMM practitioners build econometric models on historical data, usually weekly or daily, to determine, in a statistically robust way, the true incremental impact of all the factors that influence sales, such as media, promotions, pricing, the economy, seasonality and competitor activity, all at the same time. MMM is great at absolute impact evaluation: not only does it consider and model all the factors that affect sales, but it can also determine the true incremental effects of intermediary channels such as PPC and email. This gives us a great overview of where sales growth is coming from, whilst also delivering accurate ROIs channel by channel. So, what’s the downside? Well, MMM is not so good at providing detail within channel, i.e. PPC by keyword, Display by platform, etc. This is because there are only so many factors an MMM model can consider, which makes within-channel impacts difficult to estimate. Traditional MMM can also be a slow and painstaking process: it takes a long time to get to the answer, as the data needs to be ingested, cleaned, analysed and reported back on.
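As a rough illustration of the econometric idea, the sketch below simulates three years of weekly sales driven by a baseline, seasonality, price, TV and PPC. TV enters through geometric adstock, a common way of modelling carry-over. The sketch then fits all drivers at once with ordinary least squares. Everything here is synthetic and deliberately simplified. The adstock decay of 0.5 is assumed known, whereas real MMM work has to estimate it, handle saturation and diminishing returns, and validate the model far more carefully.

```python
import numpy as np


def adstock(spend, decay):
    """Geometric adstock: each week carries over `decay` of last week's effect."""
    out = np.zeros_like(spend, dtype=float)
    carry = 0.0
    for t, x in enumerate(spend):
        carry = x + decay * carry
        out[t] = carry
    return out


rng = np.random.default_rng(0)
weeks = 156  # three years of hypothetical weekly data
t = np.arange(weeks)
tv = rng.gamma(2.0, 50.0, weeks) * (rng.random(weeks) < 0.4)  # bursty TV
ppc = rng.gamma(5.0, 10.0, weeks)  # always-on search
price = 10 + rng.normal(0, 0.5, weeks)
season = np.sin(2 * np.pi * t / 52)
tv_ad = adstock(tv, 0.5)  # decay assumed known for this sketch

# Synthetic "true" sales process (all coefficients are assumptions).
sales = (1000 + 150 * season - 40 * price
         + 0.8 * tv_ad + 1.5 * ppc
         + rng.normal(0, 10, weeks))

# Fit every driver simultaneously with ordinary least squares.
X = np.column_stack([np.ones(weeks), season, price, tv_ad, ppc])
coef, *_ = np.linalg.lstsq(X, sales, rcond=None)
for name, b in zip(["base", "season", "price", "tv_adstock", "ppc"], coef):
    print(f"{name:>10}: {b:8.2f}")

# Incremental sales per unit of spend. PPC enters linearly, so its ROI is
# just its coefficient; TV's includes the carry-over captured by adstock.
roi = {"tv": coef[3] * tv_ad.sum() / tv.sum(), "ppc": coef[4]}
print(roi)
```

Because price, seasonality and both channels sit in one regression, each channel’s effect is estimated net of the others. That is the property that lets MMM give absolute, comparable ROIs channel by channel.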

So, what’s the answer?

Most providers have taken the obvious shortcut and bluntly fused MTA with MMM using scaling factors: you get a measure X for channel A from MMM and a measure Y for channel A from MTA, then use the factor X/Y to get to the detail underneath channel A. This is better than using MTA on its own, but it applies one factor to everything within channel A, so the split underneath is simply inherited from MTA and the MMM evidence can never change it. For example, one keyword group within PPC may be having a really positive incremental effect on sales compared with the rest of PPC, but this blunt tack-on solution wouldn’t give it credit for that.
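To see why the blunt fusion cannot reward a standout keyword group, the sketch below applies the X/Y scaling to hypothetical keyword-level MTA credits for PPC. The helper name `scale_mta_to_mmm`, the keyword groups and the figures are illustrative assumptions.

```python
def scale_mta_to_mmm(mta_credit, mmm_total):
    """Blunt fusion: rescale within-channel MTA credit to match the MMM total.

    Every sub-channel is multiplied by the same factor X / Y, so the
    relative shares coming out of MTA never change.
    """
    mta_total = sum(mta_credit.values())  # Y: channel total according to MTA
    factor = mmm_total / mta_total        # X / Y
    return {k: v * factor for k, v in mta_credit.items()}


# Hypothetical PPC keyword groups: MTA credits 1,000 sales to PPC,
# while MMM estimates that 600 of them are truly incremental.
ppc_mta = {"brand": 500, "generic": 300, "competitor": 200}
scaled = scale_mta_to_mmm(ppc_mta, mmm_total=600)
print(scaled)  # every group scaled by the same factor, 600 / 1000 = 0.6
```

Whatever MMM says about PPC as a whole, each group keeps exactly its MTA share (here 50/30/20), so a group that is genuinely more incremental than the rest is never recognised.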

Ideally, what you need is a statistical method that harnesses all the positives of the techniques above without any of their pitfalls: the so-called “sweet spot” which, as you can see below, delivers a high level of detail with accuracy.

This is not as far away as you think: modern machine learning approaches can now stitch these three disparate techniques together (and can also include others, such as TV attribution and Direct Response tracking) and model them dynamically in a statistically robust way. This means that not only do you get the absolutes, but you can also model the detail underneath dynamically, reaching a full, detailed and accurate solution.
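As a generic illustration of that principle (a sketch under stated assumptions, not Brightblue’s framework), the example below regresses weekly sales on hypothetical keyword-group spend. The coefficients are shrunk towards a prior, here an equal return for every group as the blunt X/Y scaling would imply, rather than being fixed to it. Ridge-style shrinkage towards a prior is a standard technique: it is the MAP estimate under a Gaussian prior. The data, the penalty `lam` and the helper name `ridge_to_prior` are all assumptions.

```python
import numpy as np


def ridge_to_prior(X, y, prior, lam, penalised):
    """Least squares that shrinks selected coefficients towards `prior`.

    Minimises ||y - X b||^2 + lam * sum_j penalised_j * (b_j - prior_j)^2,
    i.e. the MAP estimate under a Gaussian prior centred on `prior`.
    """
    P = lam * np.diag(penalised.astype(float))
    return np.linalg.solve(X.T @ X + P, X.T @ y + P @ prior)


rng = np.random.default_rng(1)
weeks = 104
# Hypothetical weekly spend for three PPC keyword groups:
# brand, generic, competitor.
spend = rng.gamma(5.0, 10.0, size=(weeks, 3))
true_beta = np.array([0.5, 2.5, 0.5])  # "generic" outperforms the rest
sales = 800 + spend @ true_beta + rng.normal(0, 20, weeks)

X = np.column_stack([np.ones(weeks), spend])
# Prior: every group earns the same return, as blunt X / Y scaling implies.
prior = np.array([0.0, 1.0, 1.0, 1.0])
penalised = np.array([False, True, True, True])  # never shrink the baseline
beta = ridge_to_prior(X, sales, prior, lam=500.0, penalised=penalised)
print(np.round(beta[1:], 2))
```

Pushing `lam` towards infinity pins every group to the prior, which reproduces the blunt scaling. Lowering it lets the weekly data pull the standout “generic” group away from the others.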

A unified solution

At Brightblue we have developed just such a solution, building a statistical framework across MTA, MMM and Lift using modern machine learning techniques. This not only gives a total, accurate and detailed view of marketing investments but it also reduces the need for our clients to manage multiple tools or pieces of analysis.

As a result, less time is spent on disparate modelling solutions, there is a single view of ROI (so that we compare apples with apples, so to speak) and there is much greater scope for profit gains through optimising media.

Get in touch if you would like to find out more and stop wasting your time on outdated methodologies and providers!

Brightblue Consulting is a London-based consultancy that helps businesses drive incremental profit from their data. We provide predictive analytics that enable clients to make informed decisions based on data and industry knowledge. Through Market Mix Modelling, a strand of econometrics, Brightblue has a proven track record showing a 30% improvement in marketing Return on Investment for clients’ spend. If you are interested in finding out more, please contact us by email and one of our consultants will get back to you shortly.

Sources: https://amecorg.com/say-no-to-aves/